The task is episodic. Each episode's score is the maximum of the two agents' scores for that episode. The environment is considered solved when the average of those scores over 100 consecutive episodes is at least +0.5.
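The solve criterion above can be sketched in a few lines of Python. This is a minimal illustration rather than the code used in the notebooks; the function names `episode_score` and `first_solved_episode` are hypothetical, and the input is assumed to be one tuple of per-agent scores per episode.

```python
from collections import deque


def episode_score(agent_scores):
    """Score of a single episode: the maximum over the agents' scores."""
    return max(agent_scores)


def first_solved_episode(per_episode_scores, target=0.5, window=100):
    """Return the 1-based episode at which the average of the last `window`
    episode scores first reaches `target`, or None if it never does."""
    recent = deque(maxlen=window)  # keeps only the most recent `window` scores
    for episode, agent_scores in enumerate(per_episode_scores, start=1):
        recent.append(episode_score(agent_scores))
        if len(recent) == window and sum(recent) / window >= target:
            return episode
    return None
```

For example, `first_solved_episode(history)` can be called on the list of per-agent scores collected during training to find out when (or whether) the run met the +0.5 threshold.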

To set up your Python environment to run the code in this repository, follow the instructions below.

- Create (and activate) a new environment with Python 3.6.

  - Linux or Mac:

        conda create --name drlnd python=3.6
        source activate drlnd

  - Windows:

        conda create --name drlnd python=3.6
        activate drlnd

- Clone the repository (if you haven't already!), and navigate to the `python/` folder. Then, install several dependencies.

      git clone https://github.com/udacity/deep-reinforcement-learning.git
      cd deep-reinforcement-learning/python
      pip install .

- Create an IPython kernel for the

drlndenvironment.

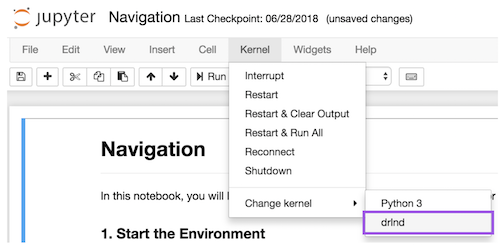

python -m ipykernel install --user --name drlnd --display-name "drlnd"- Before running code in a notebook, change the kernel to match the

drlndenvironment by using the drop-downKernelmenu.
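Once the setup steps are done, a quick check like the following can confirm that the active interpreter matches what the instructions expect. It is only a sketch: the helper name `check_setup` is hypothetical, and the default module list contains just `ipykernel`, the one module the commands above invoke directly. Other top-level module names can be passed in as needed.

```python
import importlib.util
import sys


def check_setup(required=(3, 6), modules=("ipykernel",)):
    """Return a list of problems found; an empty list means the checks passed.

    `modules` should contain top-level module names only.
    """
    problems = []
    found = sys.version_info[:2]
    if tuple(found) != tuple(required):
        problems.append(
            "Python {}.{} expected, found {}.{}".format(*required, *found)
        )
    for name in modules:
        # find_spec returns None when a top-level module cannot be located
        if importlib.util.find_spec(name) is None:
            problems.append("module not importable: " + name)
    return problems


if __name__ == "__main__":
    for problem in check_setup():
        print(problem)
```

Running this inside the activated `drlnd` environment and seeing no output suggests the Python version and kernel package are in place.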

Download the environment that matches your operating system:

- Linux: click here
- Mac OSX: click here
- Windows (32-bit): click here
- Windows (64-bit): click here

(For Windows users) Check out this link if you need help with determining if your computer is running a 32-bit version or 64-bit version of the Windows operating system.

(For AWS) If you'd like to train the agent on AWS (and have not enabled a virtual screen), then please use this link (version 1) or this link (version 2) to obtain the "headless" version of the environment. You will not be able to watch the agent without enabling a virtual screen, but you will be able to train the agent. (To watch the agent, you should follow the instructions to enable a virtual screen, and then download the environment for the Linux operating system above.)

Place the file in the DRLND GitHub repository, in the p3_collab-compet/ folder, and unzip (or decompress) the file.
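If you prefer to script the unzip step, it can be sketched with Python's standard `zipfile` module. The helper name `unzip_environment` is hypothetical, and the archive path is whatever file you downloaded; no particular file name is assumed here.

```python
import zipfile
from pathlib import Path


def unzip_environment(archive, target_dir="p3_collab-compet"):
    """Extract `archive` into `target_dir` and return the archived names."""
    target = Path(target_dir)
    target.mkdir(parents=True, exist_ok=True)  # create the folder if missing
    with zipfile.ZipFile(archive) as zf:
        zf.extractall(target)
        return zf.namelist()
```

Note that `zipfile` does not restore Unix permission bits, so on Linux or Mac the extracted environment binary may need to be made executable by hand; a regular unzip tool avoids that.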

Follow the instructions in the MADDPG/ and MADDPG_PER/ folders to get started with training the agents!

    git clone https://github.com/MoraKanHan/Collaboration_Competition_Tennis.git .
    pip install jupyter

    jupyter notebook Tennis.ipynb

    jupyter notebook Tennis-MADDPG-PER.ipynb

Open the MADDPG/Tennis.ipynb and MADDPG-PER/Tennis-MADDPG-PER.ipynb notebooks and follow the instructions in them to train the agents.

All implementation details and results can be found in the Report/ folder.

The contents of this repository are covered under the MIT License.