A tiny framework to perform adversarial validation of your training and test data.

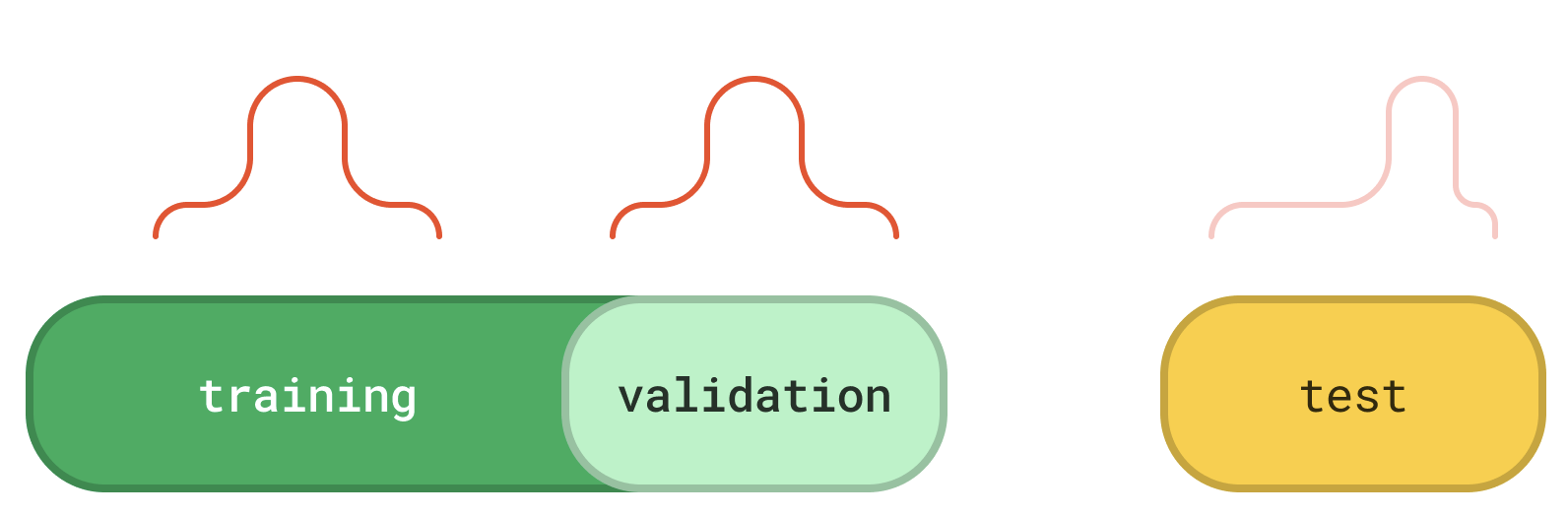

What is adversarial validation? A common workflow in machine learning projects (especially in Kaggle competitions) is:

- train your ML model on a training dataset.

- tune and validate your ML model on a validation dataset (typically a held-out fraction of the training dataset).

- finally, assess the actual generalization ability of your ML model on a “held-out” test dataset.
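The three steps above can be sketched as follows. This is a minimal illustration on synthetic data using scikit-learn; the model, the candidate values, and the split sizes are assumptions chosen for brevity and are not part of this package.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split

# Synthetic stand-in for a real dataset (assumption for illustration).
X, y = make_classification(n_samples=1000, n_features=10, random_state=0)

# Hold out a test set first, then carve a validation set out of the remainder.
X_rest, X_test, y_rest, y_test = train_test_split(X, y, test_size=0.2, random_state=0)
X_train, X_val, y_train, y_val = train_test_split(
    X_rest, y_rest, test_size=0.25, random_state=0
)

# Train one model per candidate regularization strength on the training set.
candidates = [0.01, 0.1, 1.0, 10.0]
fitted = {
    C: LogisticRegression(C=C, max_iter=1000).fit(X_train, y_train)
    for C in candidates
}

# Tune: keep the candidate that scores best on the validation set.
best_C = max(candidates, key=lambda C: fitted[C].score(X_val, y_val))

# Assess: report accuracy on the untouched test set.
test_accuracy = fitted[best_C].score(X_test, y_test)
```

The validation score is only a trustworthy estimate of `test_accuracy` when the validation and test rows come from the same distribution.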

This strategy is widely accepted, but it heavily relies on the assumption that the training and test datasets are drawn from the same underlying distribution. This is often referred to as the “identically distributed” property in the literature.

This package helps you check whether the "identically distributed" property holds for your training and test datasets or, equivalently, whether your validation dataset is a good proxy for your model's performance on the unseen test instances.
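The general idea behind adversarial validation is to label every row by its origin (training or test) and train a classifier to tell the two apart. A cross-validated ROC AUC near 0.5 means the classifier cannot separate them, which supports the "identically distributed" assumption; an AUC well above 0.5 signals a distribution shift. The block below is a generic sketch with scikit-learn on synthetic data, not this package's actual implementation; the function name `adversarial_auc` and the choice of classifier are assumptions.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import cross_val_score


def adversarial_auc(train_X, test_X, seed=0):
    """Mean cross-validated ROC AUC of a classifier that separates train rows from test rows."""
    X = np.vstack([train_X, test_X])
    # Origin label: 0 for training rows, 1 for test rows.
    y = np.concatenate([np.zeros(len(train_X)), np.ones(len(test_X))])
    clf = RandomForestClassifier(n_estimators=50, random_state=seed)
    scores = cross_val_score(clf, X, y, cv=5, scoring="roc_auc")
    return scores.mean()


rng = np.random.default_rng(0)
same_train = rng.normal(0.0, 1.0, size=(300, 3))
same_test = rng.normal(0.0, 1.0, size=(300, 3))     # same distribution as train
shifted_test = rng.normal(2.0, 1.0, size=(300, 3))  # mean shifted by two standard deviations

auc_same = adversarial_auc(same_train, same_test)
auc_shifted = adversarial_auc(same_train, shifted_test)
```

Here `auc_same` should land close to 0.5 and `auc_shifted` close to 1.0. Features that make the separation easy, such as a monotonically increasing `id` column, are the usual culprits when the score is high.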

If you want the details, take a deep dive into the companion article:

adversarial validation: can i trust my validation dataset?

The recommended installation is via pip:

```shell
pip install advertion
```

(advertion stands for adversarial validation)

```python
import pandas as pd

from advertion import validate

train = pd.read_csv("...")
test = pd.read_csv("...")

validate(
    trainset=train,
    testset=test,
    target="label",
)

# // {
# //     "datasets_follow_same_distribution": True,
# //     "mean_roc_auc": 0.5021320833333334,
# //     "adversarial_features": ["id"],
# // }
```

If you wish to contribute, this is a great place to start!

Distributed under the Apache License 2.0.